The physics of agency

8 billion worlds. Three questions. The physics of why we get lost.

This is part 7 of The Stone series.

[Here’s Part 1, Part 2, Part 3, Part 4, Part 5, and Part 6.]

Would it shock you to know that we don’t live in the same world?

We share one planet, sure. But we don’t live in the same world. Over 8 billion realities occupy this shared planet of ours.

Each one shaped by culture, class, and experience.

Your “individual freedom” is my “collective responsibility.”

Your “hard work” is my “rigged system.”

Your “harmless joke” is my “lifelong trauma.”

These aren’t opinions. They’re complete worldviews—with their own rules, truths, and values.

For most of human history, our fractured reality wasn’t a crisis. Different ‘realities’ stayed separated. A peasant in medieval France never debated theology with a Turkish merchant. Even two American neighbors in 1953 could live in different worlds without ever colliding.

When worldviews did meet, they had time. A religious argument might take generations to become a war. A class divide simmered for centuries before revolution kicked in. Distance, ignorance, and common enemies kept competing realities from colliding faster than people could adapt.

Then technology. From printing presses to personal computers, every buffer got removed. Town halls became algorithm-fed groups. Local newspapers became targeted feeds. The water cooler became Slack channels with the same disgruntled workers.

The speed of conflict isn’t just faster. It’s different. And it’s breaking everything.

…

Posts 4–6 built a machine that works. Potential occurs at boundaries. A conversion pattern. Four kinds of work.

But all of that assumed an honest machine. One that reads the world accurately. And one that updates when reality pushes back, then routes energy where it’s actually needed.

What happens when the machine can think?

What happens when it builds a picture of the world and defends that picture against correction? When it imagines futures that don’t exist yet and steers toward them, even when the terrain has shifted underneath?

That’s the fourth piece. The navigator is the layer that builds the map, chooses the destination, and allocates the fuel.

Three questions

Before we unpack any of this, let me share three questions with you first. They sound simple. They are simple. And they form the complete diagnostic for why conscious systems—people, teams, organizations, civilizations—fail to convert potential they can see.

What’s real? Does your picture of the world match the world?

What matters? Even if you see clearly, which direction do you steer?

What do you actually fund? Not what you say matters. Where the energy actually goes.

Every failure to make real change traces back to one of these three.

Wrong picture: you navigate by a map that doesn’t match the territory.

Wrong direction: you see clearly but walk toward something that doesn’t serve you.

Wrong allocation: you point one way and resource another, then tell yourself a story about why it all makes sense. Do you put your money where your mouth is?

These aren’t rhetorical questions. Each one describes a measurable gap—and each gap delivers consequences whether or not the navigator notices.

The shared physics between animals and humans

These questions aren’t unique to humans. The first two exist in primitive form wherever there’s sensing and choosing. A gazelle builds a model of where the lion stands, the more primitive version of “what’s real?” A wolf directs its pack toward prey rather than berries—a primitive version of “what matters?”

But notice what animals can’t do. The gazelle can’t defend its model against correction. New data arrives, the picture updates immediately. There’s no committee meeting. No identity crisis. The wolf doesn’t deliberate between hunting and composing symphonies. “What matters?” contains one entry, and evolution wrote it.

Humans broke both constraints. We build pictures of reality and defend them against correction. We generate dozens of answers to “what matters?” and pick the ones that feel right.

Which brings us to the third question, the one that doesn’t exist in nature at all.

A bird can’t say “my priority is vigilance” while spending every calorie on nest-building. It just does what it does. There’s no story running alongside the action. Only humans do that. Only we can look at what we’re doing, know it doesn’t match what we say matters, and tell ourselves it all makes sense anyway.

That’s uniquely human. And the crucial enabler wasn’t consciousness alone. It was consciousness plus surplus. Without surplus, even humans can’t maintain this narrative gap with reality because misalignment kills you by sundown.

Surplus extends the runway. Consciousness fills it with stories.

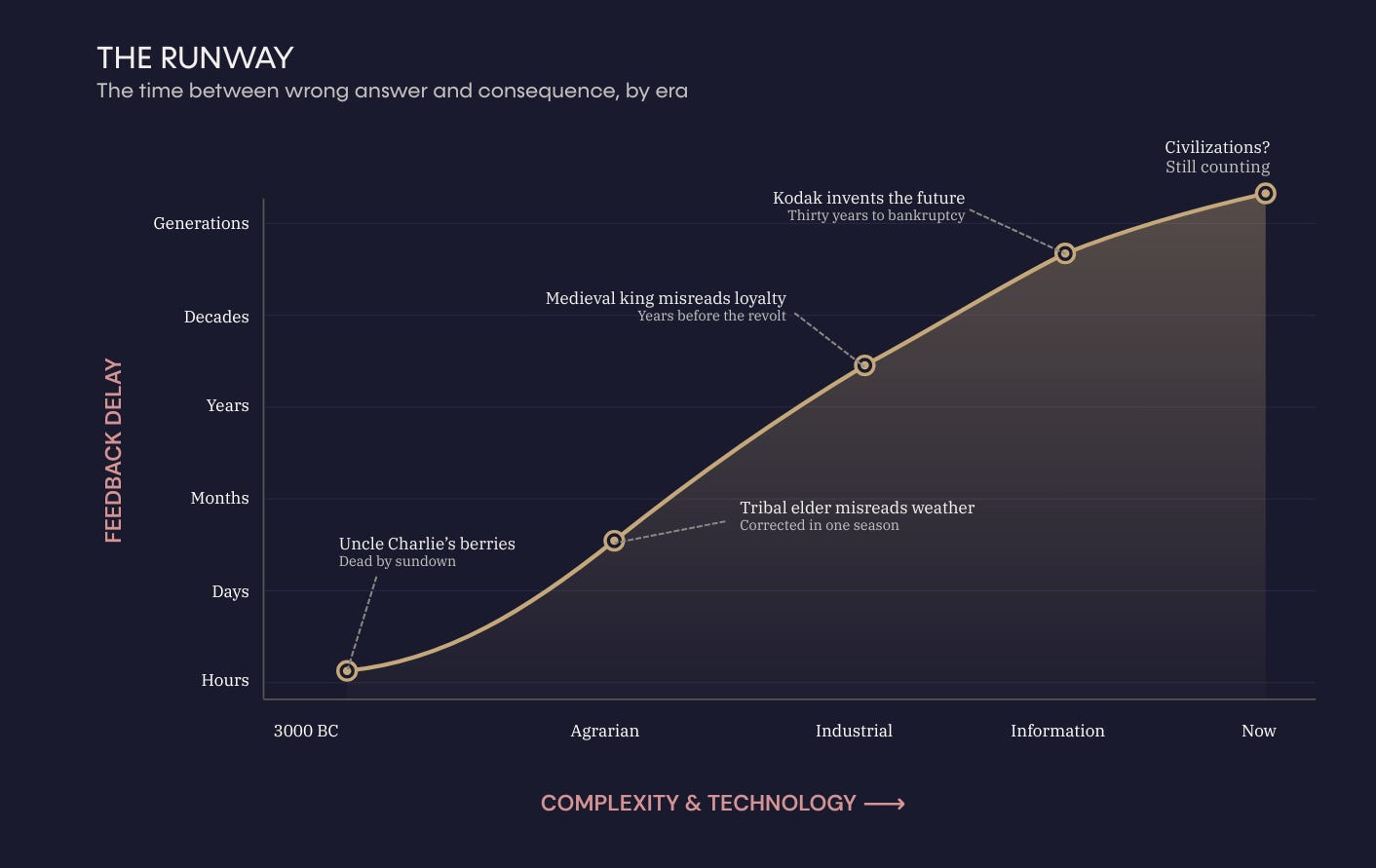

In 3000 BC, “what’s real?” meant don’t eat the berries that killed Uncle Charlie. The feedback arrived in hours. Why build an elaborate story about how the berries were actually fine when they weren’t?

“What matters?” didn’t require deliberation—survival answered it. “What do you fund?” answered itself. You funded whatever kept you alive.

The questions are always there, running in the background. But the gap between getting them wrong and paying for it was almost zero. Eat the wrong berry, you’re dead by sundown. No time to build a story about why the berry was fine.

Then the world grew more complex. Survival grew more assured. And something shifted: you could get these questions wrong and not feel it right away. Hours grew to days. Days grew to years. Wrong answers got more runway, and more runway meant more time for stories to fill the gap.

Now let’s take the questions one at a time and watch what happens when that gap stretches from days to decades.

What’s real?

The CEO sees “necessary restructuring.” Employees see “broken promises.”

HR sees “policy compliance.” Workers see “soul-crushing bureaucracy.”

Middle managers see “maintaining standards.” Their teams see “micromanagement.”

Same building. Same company. Same quarter. Six pictures of the same territory. None of them is lying.

Each person navigates by a model of reality. Not reality itself—a model. The model says: here’s where we are, here’s what’s happening, here’s what needs to change. And every model diverges from the territory it claims to describe.

Physics calls this the model-reality gap—the distance between the internal picture and the actual territory. Every conscious system carries one.

The question isn’t whether the gap exists. It always does. The question is how fast it closes when reality pushes back.

Physics notebook: The model-reality gap (ΔΦ) measures the distance between what the system believes and what the territory actually contains.

ΔΦ = 0: Perfect map

ΔΦ ⟶ 1: Complete disconnection
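One rough way to make that scale concrete, offered as a sketch rather than the series’ own definition: treat the model and the territory as sets of yes/no claims, and score ΔΦ as the fraction of claims the model gets wrong. The function name `model_reality_gap` and the example claims are illustrative assumptions, not something from earlier posts.

```python
def model_reality_gap(model: dict[str, bool], territory: dict[str, bool]) -> float:
    """Fraction of shared claims where belief and territory disagree.

    0.0 means a perfect map; 1.0 means complete disconnection.
    This scoring rule is an assumption made for illustration.
    """
    shared = model.keys() & territory.keys()
    if not shared:
        raise ValueError("model and territory share no claims to compare")
    mismatches = sum(model[claim] != territory[claim] for claim in shared)
    return mismatches / len(shared)


# Hypothetical claims, invented for this example.
territory = {
    "morale_is_high": False,
    "targets_are_stable": False,
    "restructuring_saved_money": True,
    "promises_were_kept": False,
}
ceo_model = {
    "morale_is_high": True,
    "targets_are_stable": True,
    "restructuring_saved_money": True,
    "promises_were_kept": True,
}

gap = model_reality_gap(ceo_model, territory)  # 3 of 4 claims miss the territory
```

Real pictures of the world aren’t checklists, of course. The point is only that the gap is a quantity you could, in principle, measure.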

Why do models diverge? Not because people are stupid or dishonest. Because models get built from experience. Past data. Filtered through what you’ve seen work and fail. Also shaped by what you want to be true.

The CEO’s model is built from board conversations, strategy decks, and a view from the top that doesn’t include what the mailroom looks like at 6 pm. The frontline worker’s model is built from missed paychecks, moving targets, and the distance between what leadership says and what actually changes on Wednesday morning.

Both pictures contain real data. Both miss lots. And the gap between each model and the actual territory opens silently. And there’s no pop-up saying: “Warning! Your model of reality now diverges 40% from actual conditions.”

A tribal elder who misread the weather got corrected in one season. A medieval king who misread his kingdom’s loyalty might survive for years before the revolt. A modern CEO who misreads the market might run on the wrong model for a decade before the company collapses. It’s still the same question. The feedback loop now stretches from hours to years to decades.

…

A manager sends a policy memo. She intends it to set clearer boundaries, leave fewer gray areas, and make everyone safer. One team reads it that way. Another team reads it as a threat: autonomy stripped under the banner of “support.”

Same memo. Same words. Two worlds. She didn’t collide with reality. She collided with a different model of reality. And neither picture checked itself against the territory. Her model said, “I’m helping.” Their model said, “You’re controlling.”

…

The gap doesn’t cause the crisis. Gaps between models and reality always exist. Your map of the world will never perfectly match the world. That’s fine.

The crisis arrives when the gap can’t close. When new information shows up and the model doesn’t update. Or updates in the wrong direction. Or when the system defends the picture against the update.

Physics calls this correction rate—how fast the model updates when reality contradicts it.

When correction runs high, incorrect models are corrected quickly.

When correction drops toward zero, the gap compounds.

And when correction goes negative—when contradictory evidence actually strengthens the wrong picture—you’ve entered territory that explains everything from Kodak to cults.

Physics notebook: Correction rate (λ) measures how fast the model updates.

λ > 0: self-correcting

λ = 0: frozen

λ < 0: contradiction strengthens the wrong picture
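To see the three regimes side by side, here is a minimal sketch. It assumes something the notebook doesn’t state: that at each step the gap changes by −λ times its current size, and that it stays clamped between 0 and 1. The function `simulate_gap` and the numbers are hypothetical.

```python
def simulate_gap(initial_gap: float, correction_rate: float, steps: int) -> list[float]:
    """Track the model-reality gap over time under an assumed update rule.

    Assumed rule (not from the series): next_gap = gap * (1 - correction_rate),
    clamped to the range [0, 1].
    """
    gaps = [initial_gap]
    for _ in range(steps):
        next_gap = gaps[-1] * (1.0 - correction_rate)
        gaps.append(min(1.0, max(0.0, next_gap)))
    return gaps


self_correcting = simulate_gap(0.4, 0.5, steps=3)   # lambda > 0: gap shrinks each step
frozen = simulate_gap(0.4, 0.0, steps=3)            # lambda = 0: gap never moves
entrenching = simulate_gap(0.4, -0.5, steps=3)      # lambda < 0: gap grows until it hits 1
```

Under this assumed rule, a positive rate halves the gap every step, a zero rate leaves it where it started, and a negative rate pushes it to complete disconnection within a few steps.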

What happened to our Kodak moment?

Kodak invented the digital camera in 1975! The company that would be destroyed by digital photography created digital photography. They held the future in their hands for three decades.

They saw the data. Every year, more data. Their model of the world said: film is our business. The data said: film is ending. The model didn’t update. It defended itself. Year after year after year.

That’s a model that became load-bearing. Too much identity, too much infrastructure, too much “this is who we are” built on top of a map that loudly stopped matching the territory. The cost of updating the picture felt larger than the cost of keeping it. Until physics delivered a bill the old story couldn’t absorb.

Uncle Charlie’s berries killed one person in an afternoon. Kodak’s wrong picture destroyed a company over the course of 30 years. The same question—“what’s real?”—with the feedback loop stretched from hours to decades.

…

This separates vision from delusion.

Both involve a picture that reaches beyond current conditions. The entrepreneur who sees a market before it forms—that’s vision. The executive who sees a strategy the market has already abandoned—delusion. Same cognitive machinery. Same capacity to model futures that don’t exist yet.

The only difference: coupling. How tightly the model stays tethered to the environment.

Vision maintains coupling. It checks back. It updates when the territory pushes back. It pays a truth tax in small, frequent installments.

Delusion decouples. It defends the picture against correction. The truth tax accrues silently. And the longer it accrues, the larger the bill when physics finally comes to collect.

But accurate models don’t guarantee we’ll thrive. You can see reality with perfect clarity and steer straight toward extraction, comfort, or short-term gain. You can read the territory perfectly and still walk in the wrong direction.

Knowing what’s real doesn’t tell you which way to walk. That’s the second question.

What matters?

A river doesn’t choose its course. A cell doesn’t deliberate about which boundary to cross. But we do. Every day. Which boundary to approach? Which crossing to attempt? Which potential to pursue?

Physics calls this capacity imagination magnitude—the span of futures the system can represent beyond what the environment signals. Not good imagination. Not accurate imagination. Large imagination. The range of destinations the navigator can point toward.

Physics notebook: Imagination magnitude (m) measures the span of futures the system can represent beyond what the environment signals.

m = 0: the system only responds to present signals

m ⟶ ∞: the system can model arbitrarily distant futures

This capacity explains why humans build cathedrals that take generations to complete. Plant trees they’ll never sit under. Write constitutions for nations that only half exist. It also explains why people wage holy wars, build empires on extraction, and destroy the commons while pontificating about stewardship. Same machinery. Same capacity. Different coupling.

Remember: the same mechanism that enables reaching beyond signals guarantees reaching beyond reality.

In other words, the same machinery that lets you imagine a better future lets you imagine a fake one. And it can’t always tell the difference.

m and identity

This gets dangerous when the answer to “what matters?” fuses with identity—who I am, what I stand for, what my life means. The system stops asking whether the picture matches the territory. It starts defending the picture.

Your kid wants to be a musician. They’re good. But you spent twenty years picturing them in medicine. That picture isn’t a preference. It’s load-bearing. Meaning: other things are built on top of it. Your identity, your sacrifices, your picture of what “safe” looks like for your child: all of it rests on that one image. Pull it out and everything above it shifts.

So the kid plays a show. You attend, but over dinner you mention the MCAT (the medical school admissions test). They share a new recording and you respond with an article about AI replacing creative work. You’re not being cruel. You’re navigating by a picture that fused with your identity so completely that updating it feels like losing yourself.

Eventually, the relationship erodes from the tax of steering by a picture the kid stopped matching years ago. Same physics as Kodak. A model became load-bearing, and correcting it felt more expensive than living with the gap. Until that gap delivers consequences the model can’t ignore.

The technological accelerant

For most of human history, different answers to “what matters?” had time. Time to coexist. Time to negotiate. Time to find accommodation. Geography kept them apart. Ignorance buffered the collisions. Common enemies forced temporary alignment. You could hold a completely different picture of what mattered from the village across the valley, and it might never become a problem in your lifetime.

Technology compressed all of that. Town halls became algorithm-fed groups. Local disagreements became global firestorms. Different worldviews don’t slowly negotiate anymore—they slam together at digital speed, with consequences that ripple through entire systems before anyone can adapt.

And what if better communication actually makes things worse? More transparency doesn’t close the gap between different models of what matters.

The gap might not be about information at all. People aren’t just looking at different data. They’re steering toward different destinations.

Give the CEO and the frontline worker the same dashboard—same numbers, same data—and they’ll probably steer in opposite directions. Because “what matters” diverges even when “what’s real” converges.

Seeing reality accurately isn’t enough. You also need a direction worth steering toward. Two questions answered. But the third catches the system lying to itself.

What do you actually fund?

Surplus didn’t just crack open “what matters?” It created something more dangerous: the possibility of saying one thing and funding another. When ‘misalignment’ kills you by sundown, you can’t sustain any kind of model-reality gap.

When you have surplus, when you can survive longer with less risk, the model-reality gap can persist for months or years. An entire career. Generations, even.

Post 6 built something the reader might not have fully absorbed yet: being and doing couple as one reality.

What the system does and what the system is don’t separate into two things. They describe one thing from two angles. The whirlpool’s shape and its motion don’t separate. The motion is the shape.

So follow where energy is spent. It reveals what the system actually commits to.

In other words, don’t read the brochure. Read the budget.

…

Maybe a company says it values innovation, but rewards people who maintain the status quo. Look at the promotions. Look at who gets resourced.

But what happens to the person who actually disrupts something—do they get celebrated, or managed out?

The person who says growth matters but spends every evening in the same routine, same social feeds, same conversations, same comfortable loop. The machinery routes energy toward what it’s actually optimized for, and comfort is a powerful optimizer. The stated direction says growth. The energy flow says chill.

The city that names sustainability as a priority and then resources extraction at ten times the rate. Read the budget. The budget never lies.

In each case, the stated answer to “what matters?” diverges from the revealed answer. It’s not hypocrisy in the moral sense. Most people aren’t being dishonest on purpose. It’s a simple navigation failure. The system modeled one direction, resourced another, and then told itself a story that reconciled the contradiction.

The story works, right up until physics delivers consequences the story can’t absorb.

The system works flawlessly, too—and that’s the problem. The machinery never breaks. It produces results consistent with its actual configuration, not its stated intentions. Always.
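“Read the budget” can be made literal. The sketch below compares stated priorities with actual spending by turning both into shares and taking half the sum of the absolute differences: 0 means you fund exactly what you say, 1 means the two have nothing in common. The function name `allocation_gap`, the scoring rule, and every number here are assumptions for illustration, not figures from the series.

```python
def allocation_gap(stated: dict[str, float], funded: dict[str, float]) -> float:
    """Distance between stated priorities and actual resource flows.

    Both inputs are converted to shares that sum to 1. The score is half the
    total absolute difference between those shares, so it runs from 0 to 1.
    """
    def to_shares(weights: dict[str, float]) -> dict[str, float]:
        total = sum(weights.values())
        if total <= 0:
            raise ValueError("weights must sum to a positive number")
        return {name: value / total for name, value in weights.items()}

    stated_shares = to_shares(stated)
    funded_shares = to_shares(funded)
    categories = stated_shares.keys() | funded_shares.keys()
    return 0.5 * sum(
        abs(stated_shares.get(c, 0.0) - funded_shares.get(c, 0.0)) for c in categories
    )


# Hypothetical city: the brochure puts 80% of the emphasis on sustainability,
# while the budget resources extraction at ten times the rate.
brochure = {"sustainability": 0.8, "extraction": 0.2}
budget = {"sustainability": 1.0, "extraction": 10.0}

city_gap = allocation_gap(brochure, budget)
```

For this made-up city the score lands around 0.7. Most of what the brochure says is not what the budget does.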

The truth tax

Every misalignment between “what you say” and “what you fund” compounds. At the very least, it’s energy spent navigating by a map that doesn’t match the territory. Talk is cheap, right? Resource flows tell you what really matters.

We’ll call it the truth tax. Uncle Charlie paid it instantly: poisoned by the wrong berries, dead by sundown. Kodak paid it for over 30 years, the company killer ironically sitting in its own labs. The parent pays it in the growing distance at every holiday dinner.

The tax doesn’t disappear because the feedback loop lengthened. It compounds. And when a system’s map of reality diverges faster than correction can close the gap, you get what the physics calls a Lambda Crisis.

The fixable problem became unfixable while everyone navigated by a model that said it was already fixed.
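A back-of-the-envelope version of that race, under assumptions of my own: suppose the world drifts away from the map by a fixed amount each period, and correction removes a fraction λ of whatever gap exists. Then the gap settles at drift divided by λ, capped at 1. The names `steady_state_gap` and `is_lambda_crisis`, the update rule, and the numbers are all hypothetical.

```python
def steady_state_gap(drift: float, correction_rate: float) -> float:
    """Long-run gap under the assumed rule: gap += drift - correction_rate * gap.

    drift: how much the territory moves away from the map each period (> 0).
    correction_rate: fraction of the gap closed each period (at most 1).
    The gap is capped at 1.0, meaning complete disconnection.
    """
    if drift <= 0:
        raise ValueError("drift must be positive for this sketch")
    if correction_rate > 1:
        raise ValueError("correction_rate above 1 is outside this sketch")
    if correction_rate <= 0:
        return 1.0  # nothing pulls the gap back, so drift wins eventually
    return min(1.0, drift / correction_rate)


def is_lambda_crisis(drift: float, correction_rate: float) -> bool:
    """True when divergence outruns correction all the way to disconnection."""
    return steady_state_gap(drift, correction_rate) >= 1.0


responsive = steady_state_gap(drift=0.05, correction_rate=0.5)   # settles at a small gap
sluggish = is_lambda_crisis(drift=0.05, correction_rate=0.02)    # correction can't keep up
```

Same drift in both cases. The only thing that changed is how fast the system corrects, and that alone decides whether the gap stays small or runs away.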

Quick application

It’s late. You’re alone with the thing you keep postponing.

There’s a call you need to make. A form you need to submit. A conversation you need to start. One action that would force a new chapter.

You don’t do it.

Instead, you clean the kitchen. You watch one more episode of the Desperate Husbands of Orange County. You answer low-stakes emails. You do “productive” things that don’t change your life.

Now the three questions:

What’s real? You know exactly what you need to do. You’re not confused.

What matters? You want the change. You’ve wanted it for months.

What gets funded? Desperate husbands. Not the change. The old routine. You fund the avoidance.

Three answers, and that’s just one person. Imagine a family, community, company, or city.

Remember, humans can run two maps at once—reality and story—and the story can win the budget. The machinery isn’t broken. The navigator is fully operational.

The fourth piece

Three questions. Three failure modes.

Now let’s add one more thing to the pot: conscious systems can get all three wrong at once and still tell a perfectly coherent story about why they’re right.

In other words, you can be wrong about what’s real, wrong about what matters, and wrong about what you’re funding—and still feel completely justified.

Step back. Now you can see why three pieces weren’t enough.

Potential tells you where the raw material comes from—but it can’t make you act on it.

The pattern tells you how change unfolds—but it can’t stop you from quitting halfway.

SIRF does the work—but it doesn’t decide which doors you walk up to.

The navigator chooses the doors—but it can’t guarantee its map matches the world.

Each piece works. The physics holds. But, altogether, they explain why thriving remains rare.

Thriving requires all four to line up at once: an opportunity you can actually reach, enough capacity to cross, a conversion sequence that runs to completion, and a navigator that sees clearly and responds quickly—reality, direction, and budget all aligned.

Miss any one and you still get an outcome. You will succeed at something.

Systems always produce results consistent with what they’re set up to do. They just get aimed, often by a narrator, at something other than optimal results.

Thriving stays the anomaly because four independent requirements have to align, and any one of them can fail on its own.

And the historical trend makes it worse. Complexity keeps rising. Technology stretches feedback loops. Civilization keeps lengthening the gap between getting the three questions wrong and feeling the consequences. Delusion gets more runway. The truth bill gets bigger.

That’s a legible diagnosis. And once a diagnosis is readable, it becomes addressable, even for ultra-complex systems.

One more to go

Four pieces. One machine.

We’ve described each physics piece in isolation—potential, then process, then converter, then navigator. Like learning the parts of an engine one at a time.

What happens when you run them together? When the navigator steers the converter through the pattern toward potential the source provides?

That’s a synthesis. The thing the alchemists were looking for.

Next: The synthesis

Where the science stands

Established science: The capacity for internal models (predictive processing—Friston, Clark). Model-reality gaps (cognitive dissonance—Festinger). Conscious systems navigate by representations, not direct access to reality. Confidence: ~95%.

This series’ synthesis: The three questions (ΔΦ, m, and resource allocation) form a complete diagnostic for navigation failure. Vision and delusion run on identical machinery separated only by how tightly the model stays coupled to reality. The truth tax compounds with feedback delay, and that compound interest explains why civilizations face larger crises than individuals facing the same questions. When divergence outpaces correction (λ), collapse becomes structural. Agency completes a four-piece architecture of transformation. Confidence: 60–80%. Under test.

What would break it: Systems with accurate pictures, aligned direction, and matched allocation that don’t show higher conversion rates than systems failing on one or more. If the three questions don’t function as independent failure modes. If vision-delusion can’t be distinguished by coupling strength.