The civilizational splat

A thousand different reasons it *is* your fault—and why they’re almost all wrong

This post is part of a series exploring a physics-based hypothesis about how potential actually works—where it comes from, why it appears and disappears, and what changes when you look at it differently. The claims here are testable and falsifiable. If the evidence breaks them, we'll report that too. The formal hypothesis is published as a preprint.

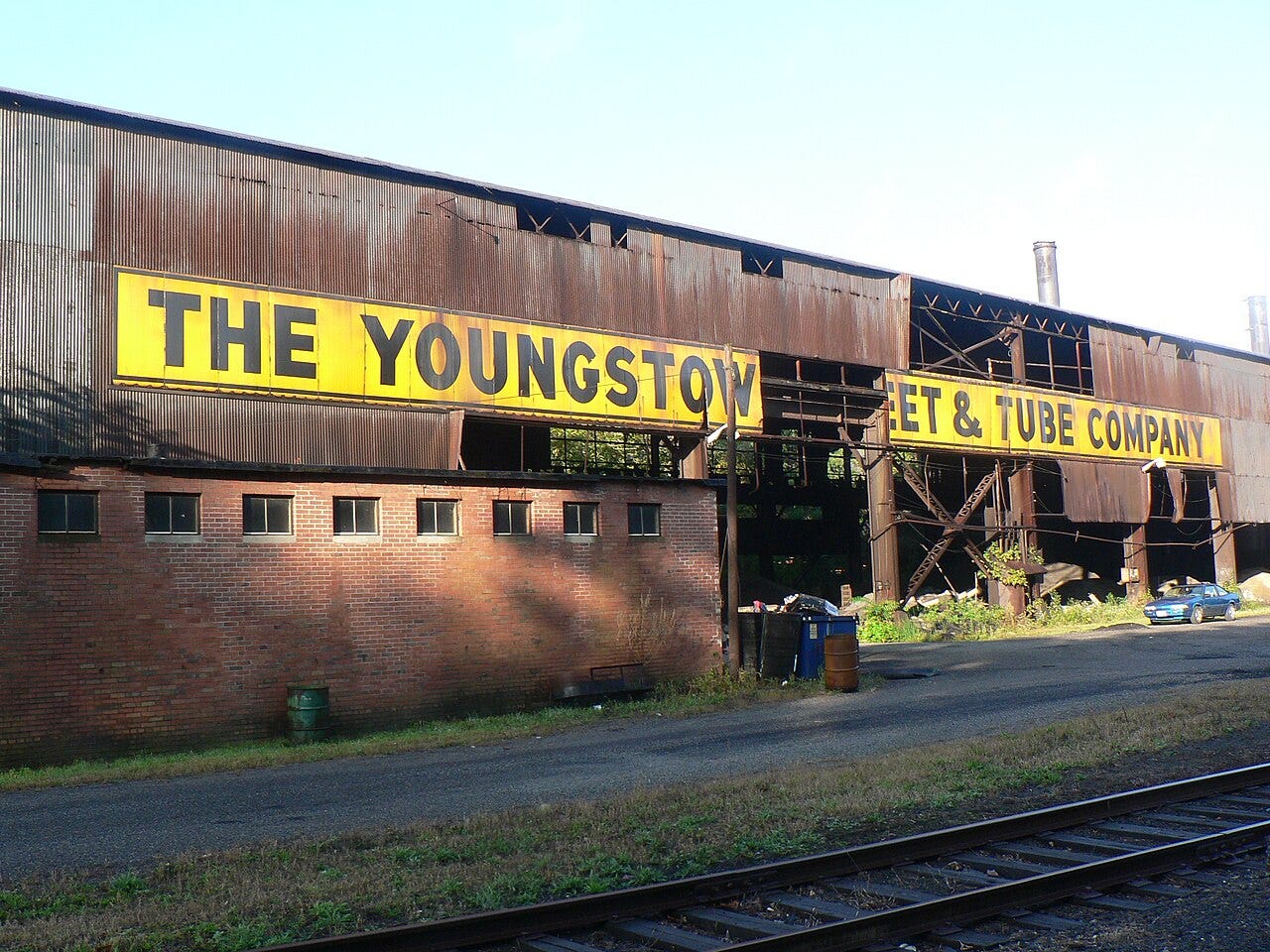

September 19, 1977. A date still known in Youngstown, Ohio, as “Black Monday.”

That afternoon, the corporate owners of Youngstown Sheet and Tube shut the doors of the Campbell Works. Five thousand people lost their livelihoods in a single announcement. Over the next decade, the wave of closures that followed wiped out 40,000 manufacturing jobs across the Mahoning Valley and stripped up to 75 percent of the city’s school tax revenues.

What followed arrived with a thousand different names.

Opioid addiction climbed. That was a drug crisis—individuals making destructive choices. Marriages collapsed. That was a values problem—individual people failing their commitments. Kids stopped performing in school. That was a motivation problem—individual students who stopped trying.

Then crime rose. That was a law enforcement problem—individual actors choosing the wrong path. Civic institutions decayed. That was apathy—individual citizens who stopped caring. Life expectancy dropped. That was a healthcare problem—individual bodies failing.

A thousand different problems. A thousand different explanations. A thousand different experts from a thousand different fields—each with a plausible story about their piece. And every one of those stories pointed at the same place: the individual.

…

So what was it? Six different explanations. Six different fields. Six different experts. Same word every time. Individual. Individual choice. Individual failure. Individual character. Individual bodies. Nobody questioned it. It’s so obvious, right?

But by the time each explanation landed, the deeper question had already disappeared:

Why did six different domains break in the same place, at the same time, in the same direction?

…

I want to be clear. Diagnosing the individual isn’t wrong. It’s sufficient. It’s expedient. But that sufficiency and expediency keep the deeper pattern hidden.

Each of those diagnoses is locally true. The opioid user did make a choice. The student did stop trying. The marriage did fail. That person did commit a crime.

But each true diagnosis closes a case—and a closed case is a question that stops getting asked. Close a thousand cases, one at a time, each for a good reason, and you never ask the only question that matters.

…

So what do we do with something nobody can see?

We need a distinction that doesn’t exist yet.

The two splats

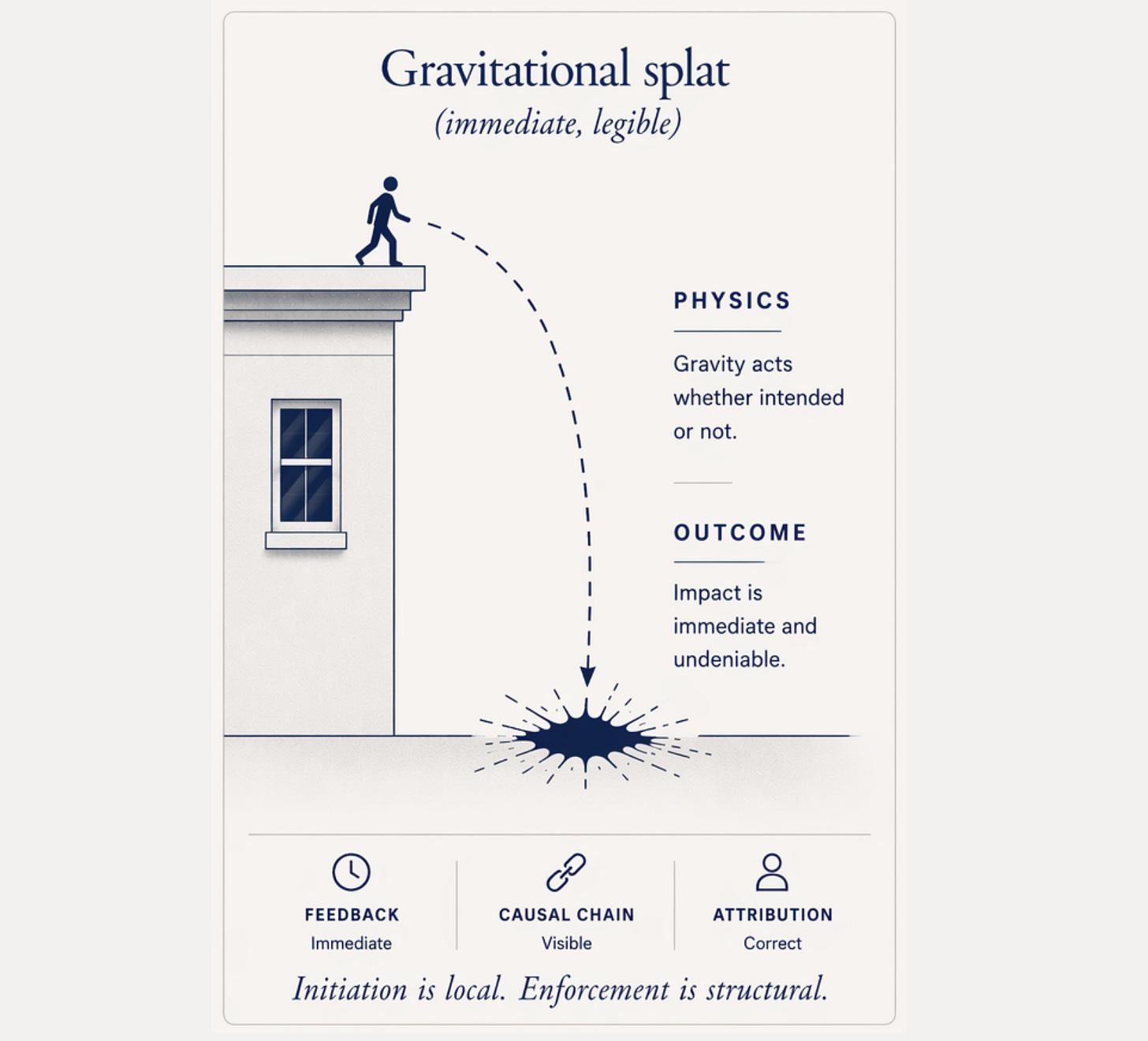

Nobody stands on their roof and flaps their arms hoping to fly. We figured that one out early. The feedback’s immediate—you jump, you land, you learn. The cause is visible. You get instant consequences, and nobody tries twice—we hope.

Call that a gravitational splat. The physics announces itself on impact.

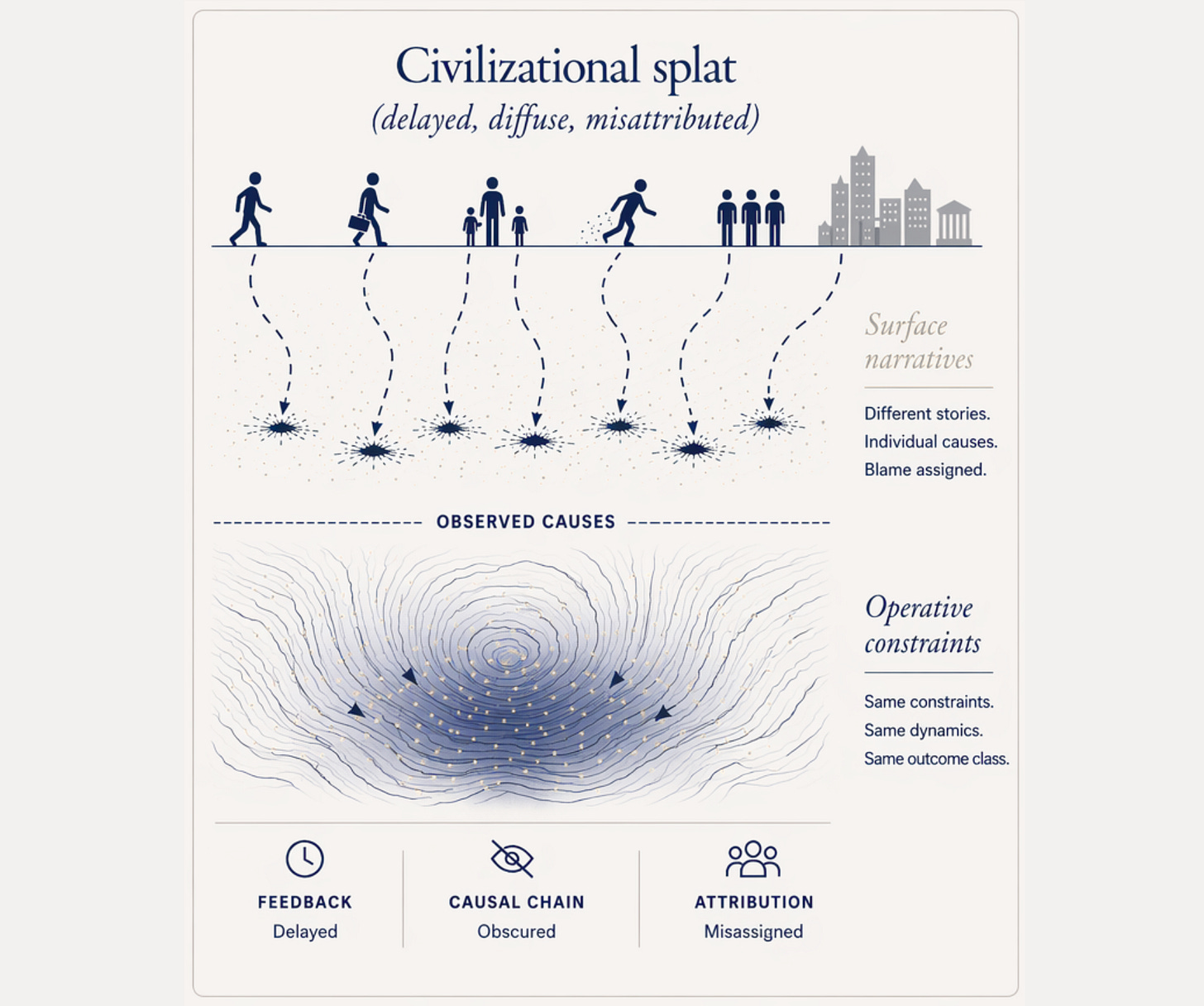

But what happens when the same physics runs at a scale where the fall takes decades? Where the cause of a splat remains invisible? Where a thousand people hit the ground one at a time, each with their own story about how they tripped?

Nobody sees the fall. They see the landing. It’s a drug problem, a values crisis, a motivation gap, or a health issue. Each splat is visible. Each one diagnosable. But the arc that produced it—the slow collapse of conditions that started years before the landing—that part was never in the frame.

Youngstown is a civilizational splat. Not a thousand people making a thousand individual mistakes. A thousand people stepping off an edge nobody can see, diagnosed one at a time on the way down, while the structure that pushed them stays unnamed.

The difference between a gravitational splat and a civilizational splat isn’t the physics—it’s timescale.

At roof-height, the feedback corrects the model—you jump, you splat, you learn, then, hopefully, you stop jumping.

At decade-scale, the feedback diffuses. It arrives slowly, wears the clothes of personal failure and “bad decisions,” and generates its own explanations faster than anyone can trace the cause. By the time the consequences land, a thousand experts in a thousand fields have already closed a thousand cases.

The pattern never becomes visible because every person is like a shard of a glass that fell from a table—each one gets their own diagnosis, their own narrative, their own case file. Nobody bothers to look at the table.

And the responses are opposite. A gravitational splat teaches: don’t jump. We’d run PSAs and “Just say no to roof jumping” campaigns.

A civilizational splat doesn’t look like physics. It looks like folk wisdom. “It’s a dog-eat-dog world,”[1] and “Most people don’t make it,” and “Life’s hard.”

Each sounds like clear-eyed realism. Each one confirms the model that produced the fall.

That confirmation is the splat. It’s the sound the wrong model makes when it hits the ground so slowly that it doesn’t make a sound.

…

So if Youngstown was a splat, what fell? What was the thing that held everything together before it shattered?

What a factory actually is

Your first instinct is probably simpler than all of this. “They lost their paychecks. Of course everything fell apart.”

But some of them found new paychecks—and the town kept collapsing. So ask the next question: what didn’t they find? What was missing that the new paycheck couldn’t replace?

Think about what a factory actually is. Not economically—physically. What does it produce, day after day, beyond steel?

Nobody designed Youngstown Sheet and Tube to do anything except make steel. But making steel required a specific configuration of people—different skills, working together, daily, on shared problems, across decades of continuity. The task demanded the configuration. And that configuration, as an unintended byproduct, opened futures that no individual inside it could have opened alone.

The apprentice who spent three years alongside the veteran machinist didn’t just learn a technique. A possibility opened for him—a future as a skilled tradesman, a provider, a person with standing in his community—that was closed before that encounter and open after it.

Each encounter left a deposit: knowledge transferred, trust established, a capability that crossed the boundary for the first time. Those deposits, repeated daily across decades, shifted what people could do and who they could become.

Not because anyone unlocked something inside them. Because the encounters kept putting new possibilities on the table, and the continuity kept them there long enough to take hold.

The paycheck matters. I’m not dismissing it. But the paycheck rides on top of something else: a set of specific, describable conditions between people. Do they see each other regularly, or rarely? Do they bring different skills and perspectives, or the same ones? Does the contact last long enough for trust to build? Are they working on shared problems, or passing like strangers? Does what one person knows actually reach the other?

That’s encounter architecture—the conditions that make contact between people actually do something. Nobody at the mill had that vocabulary. They didn’t need it. The task demanded the configuration, and the configuration did the work.

…

When the factory closed, the paychecks stopped—and everyone noticed (the obvious splat). But the encounter architecture collapsed too—and nobody noticed. Because nobody knew it was there. It was a byproduct of making steel. It wasn’t on anyone’s balance sheet. It didn’t have a name.

You can’t photograph encounter architecture. It’s structural. But you can photograph the union hall with the lights off. The empty parking lot at shift change. The Little League field with no game scheduled. The church pew with gaps where families sat for thirty years.

And you can’t photograph unrealized potential: the futures that closed when the encounters stopped. The kid who would have learned from the machinist who would have mentored him, and the generations of kids after him. The marriage that would have held together because the community around it stayed intact. None of that shows up in any dataset, because none of it ever happened.

What shows up instead are a thousand loud symptoms. So where does what could have been go?

When encounter architecture collapses, the futures it was opening slam shut. But the drive to emerge doesn’t stop. People still need to learn, to trust, to belong, to build.

The encounters where knowledge was transferred are gone. The continuity that built trust dissolved overnight. The structure that held identity in place disappeared. And the drive that would have moved toward newly opened futures routes through whatever’s still available—and in an encounter-starved field, what’s still available tends to require nothing but a threshold low enough to cross.

The opioid isn’t a choice made in a vacuum. It’s what a person reaches for when the encounters that would have opened generative futures no longer exist.

…

This isn’t just Youngstown. It’s Gary. It’s Janesville. It’s every post-industrial town where a thousand “different” problems arrived for “different” reasons—and every one traces to the same collapse. The same variable, degrading the same way, producing the same downstream pattern, is diagnosed a thousand different ways.

None of this means people don’t matter. Every person in Youngstown still made choices, still carried responsibility, still exercised agency. But they exercised it inside conditions they didn’t create alone. The conditions shape what agency has to work with. They don’t erase it.

Two predictions

Now, someone will read the above and say, “Duh. It was economic infrastructure. They went to work. They earned a paycheck. Everything else was secondary.”

Fair enough. Let’s test it. Two models. Two predictions. One dataset. You tell me which one matches.

If the paycheck is the root variable, the damage should concentrate in financial indicators—debt, housing, consumption. It should scale with income loss and reverse with income replacement. Federal aid and new employment should stop the bleeding. And the damage should stay with the people who actually lost the income.

If encounter architecture is the root variable, the damage should migrate across domains that have nothing to do with money. Neonatal health. Marital stability. School performance. Civic participation. Life expectancy. Recovery shouldn’t track income replacement. Aid shouldn’t reverse the decline. And the damage should radiate outward into people who never worked at the mill—their kids, their neighbors, the next generation.

Youngstown received significant federal and state aid. Some workers found other jobs. Income partially recovered in many households.

The town kept collapsing. Marriages kept dissolving. Kids kept underperforming. Opioid use kept climbing. Civic participation kept falling. Life expectancy kept dropping. The damage crossed every domain boundary, reached people who had never set foot in the mill, and persisted long after the paychecks were partially replaced.

That’s not a financial crisis. The income loss was real, and replacing it was necessary, but it wasn’t sufficient. A financial crisis stays in the financial domain. What Youngstown produced was a thousand different crises in a thousand different domains—which is exactly what you’d predict if the thing that was destroyed was not the paycheck but the encounter architecture underneath it.

The paycheck was one downstream product of that architecture. So was the marriage stability. So was the school performance. So was the civic life. Destroy the architecture that generated all of them, and all of them fail—each with its own name, each with its own expert, none of them obviously connected.

Only one of those two models predicts the actual shape of the damage.
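For readers who like to see a test laid out, here is a minimal sketch of the comparison in Python. It treats each model as a set of yes/no predictions and counts how many match the pattern described above. The field names and the `score` helper are illustrative assumptions, and the `OBSERVED` values are this post's qualitative reading of Youngstown, not a measured dataset. A real test would replace them with indicators from the record.

```python
# Each model makes four yes/no predictions about the shape of the damage.
# Keys are hypothetical labels chosen for this sketch.
PREDICTIONS = {
    "paycheck": {
        "spreads_to_non_financial_domains": False,
        "recovery_tracks_income_replacement": True,
        "aid_reverses_decline": True,
        "reaches_people_outside_the_mill": False,
    },
    "encounter_architecture": {
        "spreads_to_non_financial_domains": True,
        "recovery_tracks_income_replacement": False,
        "aid_reverses_decline": False,
        "reaches_people_outside_the_mill": True,
    },
}

# Assumption: the qualitative pattern claimed in the text, not measured data.
OBSERVED = {
    "spreads_to_non_financial_domains": True,
    "recovery_tracks_income_replacement": False,
    "aid_reverses_decline": False,
    "reaches_people_outside_the_mill": True,
}


def score(model, observed):
    """Count how many of a model's predictions agree with the observations."""
    predicted = PREDICTIONS[model]
    return sum(predicted[key] == observed[key] for key in observed)


if __name__ == "__main__":
    for model in PREDICTIONS:
        print(model, score(model, OBSERVED), "of", len(OBSERVED))
```

The point of writing it this way is that either model can lose. Flip any entry in `OBSERVED` on the strength of better evidence and the score moves with it.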

Janesville

In December 2008, General Motors shuttered its assembly plant in Janesville, Wisconsin. The oldest operating GM plant in the country. Over 7,000 workers at its peak. 4.8 million square feet of silent concrete.

When one person struggles with addiction, we call it a tragic mistake. Individual choice. Individual failure.

Between 2013 and 2016, the rate at which babies in Rock County were born with opioids in their systems more than tripled. By the time those children reached grade school, teachers and officers were looking at classrooms where the shared experience wasn’t a sport or a neighborhood—it was having a parent who overdosed.

Those children were not alive when the factory closed. They never worked there. They never made a “mistake.” They arrived into conditions that were already degraded—and the individual model has nothing to say about them except to wait until they’re old enough to diagnose one at a time.

“Learning disability. Next!”

“This one has behavioral issues.”

“That’s developmental delay, if I’ve ever seen it.”

A thousand individual files in a thousand individual offices carried one cause that nobody wrote on any of the forms.

When a factory closes and, years later, the rate of children born with opioids in their systems more than triples, that’s not a mistake. That’s a splat. A loud one.

The encounter architecture that opened futures in that town was removed, and the consequences seeped into the bodies of children who hadn’t even been conceived.

…

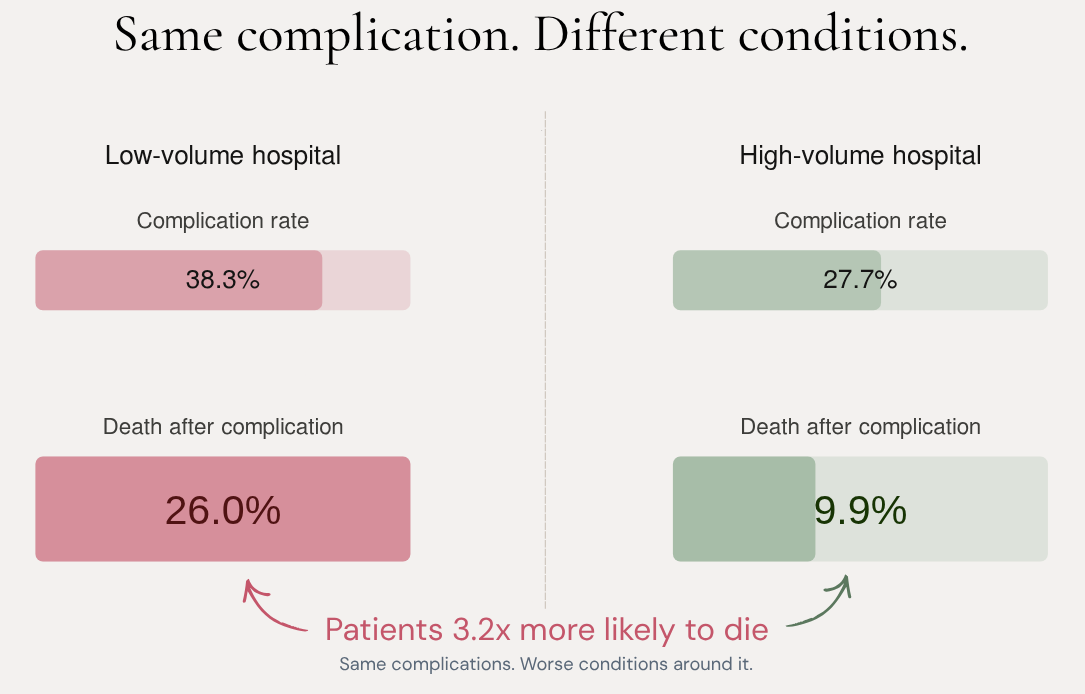

The distinction sharpens further in clinical data. Research on surgical outcomes shows that low-volume hospitals and high-volume hospitals have nearly identical rates of initial complications. The same mistake rate.

But a patient at a low-volume hospital is nearly three times more likely to die from that complication.[2]

The high-volume hospital didn’t have better surgeons. It had more encounters with that specific complication—more pattern recognition, more coordinated response, more trust between people who’d handled this together before. The conditions around the mistake determined whether the patient lived or died.

Same complication. Different conditions. Nearly three times the mortality. That’s not a personnel problem. That’s a splat.
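The arithmetic behind that comparison is simple enough to sketch. Deaths from a complication are the product of two rates: how often the complication happens, and how often a patient dies once it has. The numbers below are hypothetical round figures chosen to illustrate the shape of the finding. They are not taken from the study, and the function and variable names are assumptions made for this example.

```python
def deaths(patients, complication_rate, death_rate_given_complication):
    """Expected deaths = patients x P(complication) x P(death | complication)."""
    return patients * complication_rate * death_rate_given_complication


PATIENTS = 1000

# Hypothetical: both hospitals make the "mistake" at the same rate.
COMPLICATION_RATE = 0.20

# Hypothetical: what differs is what happens after the complication.
HIGH_VOLUME_DEATH_RATE = 0.10
LOW_VOLUME_DEATH_RATE = 0.30

high_volume_deaths = deaths(PATIENTS, COMPLICATION_RATE, HIGH_VOLUME_DEATH_RATE)
low_volume_deaths = deaths(PATIENTS, COMPLICATION_RATE, LOW_VOLUME_DEATH_RATE)
mortality_ratio = low_volume_deaths / high_volume_deaths

if __name__ == "__main__":
    print("high-volume deaths per 1000 patients:", round(high_volume_deaths))
    print("low-volume deaths per 1000 patients:", round(low_volume_deaths))
    print("ratio:", round(mortality_ratio, 2))
```

With the first factor held equal, the whole gap in outcomes sits in the second one. Counting mistakes alone would show two identical hospitals.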

Are we still firing pilots?

For decades, when a plane crashed, investigations defaulted to “pilot error.” The system was fine. The human failed. Fire the bad pilot. Train the next one better.

Then the industry made a structural shift. They stopped treating human error as a cause and started treating it as a symptom of trouble deeper in the system’s design. They stopped blaming pilots and started redesigning cockpits.[3]

Commercial flying became one of the safest modes of transport on earth. Not because pilots got better. Because the conditions around them did.

…

Most startups fail. Saying so is as unremarkable as saying, “It’s a nice day.” By the commonly cited figure, ninety percent don’t make it, and we write postmortems about the founder: wrong market, wrong timing, couldn’t execute, ran out of runway. The diagnosis always lands on the person or the decision.

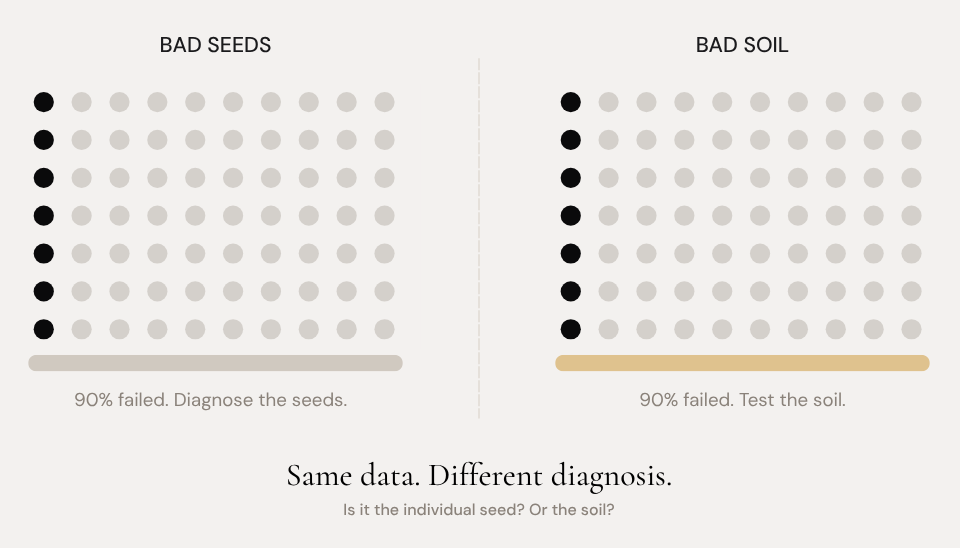

But if you planted a hundred seeds in a garden and ninety of them died, you wouldn’t conclude that ninety percent of seeds are bad seeds, would you? You’d test the soil.

But we don’t. We test the founder. We test the student. We test the employee. Most people don’t self-actualize. Most communities decline. We accept these rates as facts about the world—as features of human nature. Accepting them feels like realism. Like maturity. Like knowing how things work.

But what if it’s not realism? What if it’s Youngstown at civilizational scale—a thousand “different” failures with a thousand “different” explanations, all downstream of conditions nobody’s measuring?

What if the ninety percent is actually a soil problem?

Why the “individual” model persists

Here’s the problem with the individual diagnosis: it’s not wrong. It’s not even incomplete in any way that feels incomplete. It’s the last visible step in a causal chain whose earlier steps stay invisible.

Start at the opioid user picking up the drug. Observable. Walk it back one step: human drive with nowhere to go. Then another step: the encounters that would have opened futures no longer exist. And another: the factory that held the architecture shut down. And continue: a corporate decision made in a boardroom in another state. Each step has its own true explanation. Each one closes a case.

That’s not a wrong diagnosis. It’s a misattribution that feels like a correct one because the individual is always in the frame and the underlying architecture never is. We don’t misattribute because we’re careless. We misattribute because the visible actor is always available as an easy explanation and the structural cause never is, and “available” beats “accurate” every time you need an answer.

The result: each case gets solved. A thousand solved cases never aggregate into a pattern. The system that produced the failures never gets examined because every failure has already been accounted for.

Systemic blindness isn’t incuriosity. It’s the natural consequence of a causal chain in which every step has its own sufficient explanation.

…

Most of the time, nobody designs for this. It’s just how diagnosis works when the structural layer is invisible. But once you see that individual explanations close cases automatically and that they’re always available, always sufficient, always easier—you also see why someone with something to protect might lean into that.

In the 1950s, as evidence mounted that cigarettes caused cancer, the tobacco industry engineered the individual narrative. “The risks are generally known. She chose to smoke.”

One sentence shifted the blame to personal choice. The systemic cause—an addictive product designed for dependency, marketed to children, backed by decades of suppressed research—disappeared behind the sufficiency of that sentence.

That wasn’t a cognitive bias. That was a product. Individual sufficiency—engineered, manufactured, sold. The individual story deployed as a defense against systemic diagnosis.

Who else profits from keeping the pattern invisible?

The formal hypothesis, that potential emerges at boundaries between organized systems, is published as a preprint with falsification criteria. If you want the mechanism behind this post, start with the preprint.

The claims in this series are testable. If the evidence breaks them, we’ll report that. The prediction ledger is public.

[1] Dogs don’t eat other dogs, by the way.

[2] Ghaferi, A. A., Birkmeyer, J. D., & Dimick, J. B. Hospital Volume and Failure to Rescue With High-Risk Surgery.

[3] Dekker, S. (2014). The Field Guide to Understanding ‘Human Error.’ 3rd ed. Ashgate/CRC Press.