The car that won’t start

A car making strange noises is struggling. It needs attention—maybe repair, maybe just rest. But it’s still running. You don’t abandon it; you fix it.

A car that won’t start has failed. No amount of pressing the accelerator helps. Pumping the gas just floods the engine. Interventions that could help a struggling car probably make a failed car worse.

The same distinction applies to regimes, the established patterns of operation a system runs on, when they stop working. Some are struggling and need support. Some have failed and need to be let go.

Misdiagnosis is expensive. Supporting a failed regime wastes resources on something that can’t recover. Abandoning a struggling regime destroys something that could have been improved.

How do you tell the difference?

The persistence question

When initiatives fizzle or strategies stop working, what do you do?

Should you persist? Maybe it needs more time. More resources. Better execution. Many successes came from pushing through initial failure.

Or should you let go? Maybe it’s not a resource problem. Maybe the approach itself has failed. Persisting would just burn more resources on something that can’t recover.

This isn’t a judgment call. It can be measured.

Regime failure defined

A regime fails when demand exceeds regulatory capacity.

In plain terms, when the world asks more than the regime can reliably handle.

Regulatory capacity is a system’s ability to handle variation and maintain function. Think of it as shock absorption—how much can hit the system before it loses its pattern?

Regulatory capacity is SIRF expressed as resilience: sensing to detect problems early, structure to absorb impact, relationships to coordinate response, energy to do the work.

Demand is what the environment requires: challenges, changes, requirements, and pressure.

Demand isn’t fixed; it fluctuates. A regime that handles normal demand might fail under surge demand. A regime that handled last year’s demand might fail under this year’s.

When demand exceeds capacity, the system can’t regulate. It can’t maintain the pattern. The regime is failing—whether anyone has acknowledged it or not.

Wilting plants need water. A plant with root rot won’t recover, no matter how much water you give it. The difference is whether the regulatory mechanism itself still works. Can the roots still uptake? Can the cells still process? If yes, add resources. If no, adding resources just accelerates decay—you’re feeding the rot.

Early warning signals

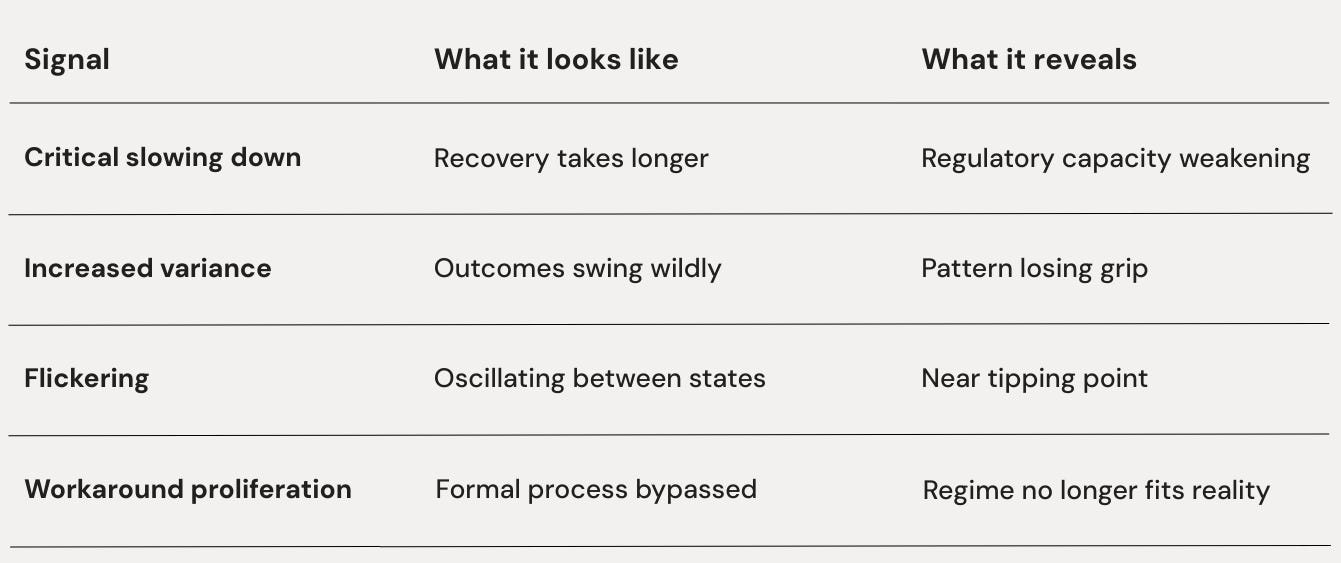

The physics of regime failure produces detectable signals before collapse. These aren’t hunches or intuitions. They’re measurable patterns that show up across domains—ecosystems, markets, organisms, organizations. The science of critical transitions has mapped them:

Critical slowing down

Recovery takes longer. When disturbed, the system used to bounce back quickly. Now it takes longer to return to normal. Each perturbation leaves a longer shadow.

Like a healthy person who shakes off a cold in two days versus an immunocompromised person who takes two weeks. Same cold. Different recovery time. The recovery time reveals the system’s regulatory capacity: how much reserve it has to restore equilibrium.
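One way to make critical slowing down concrete is a toy recovery model: after a disturbance, the system closes a fixed fraction of the remaining gap each period. The sketch below assumes that model; the name `restoring_rate` and the 5% “back to normal” tolerance are illustrative choices, not something the framework specifies.

```python
import math


def recovery_time(restoring_rate: float, tolerance: float = 0.05) -> int:
    """Periods for a unit disturbance to shrink below `tolerance`.

    Hypothetical toy model: each period removes `restoring_rate` of the
    remaining gap, so the gap after t periods is (1 - restoring_rate) ** t.
    """
    if not 0 < restoring_rate < 1:
        raise ValueError("restoring_rate must be in (0, 1)")
    # Smallest integer t with (1 - k) ** t <= tolerance.
    return math.ceil(math.log(tolerance) / math.log(1 - restoring_rate))


for k in (0.5, 0.2, 0.05):
    print(f"restoring rate {k:.2f}: recovers in {recovery_time(k)} periods")
```

Same disturbance, weaker restoring force, longer shadow: in this model, halving the restoring rate roughly doubles the recovery time, so recovery time is a sensitive read on shrinking reserve.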

Increased variance

Outcomes swing wildly. Where the system used to produce consistent results, now there’s unpredictability. Good days and bad days. Great months and terrible months. The variance itself is the signal.

Think of a car that sometimes starts fine and sometimes won’t start at all. If it failed consistently, you’d know it’s broken. The inconsistency is what confuses people.

“It worked yesterday!”

But inconsistency is the signal.
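Rising variance can be tracked with a rolling window. This sketch assumes outcomes arrive as a plain list of numbers (weekly results, say) and uses the standard library’s `statistics.pvariance`; the window size and the sample data are hypothetical.

```python
from statistics import pvariance


def rolling_variance(values: list[float], window: int) -> list[float]:
    """Population variance of each consecutive `window`-length slice."""
    if window < 2 or window > len(values):
        raise ValueError("window must be between 2 and len(values)")
    return [pvariance(values[i:i + window]) for i in range(len(values) - window + 1)]


# Hypothetical weekly results: steady at first, then swinging wider.
weekly = [10, 11, 10, 11, 10, 11, 8, 14, 6, 15, 5, 17]
print([round(v, 2) for v in rolling_variance(weekly, 4)])
```

The average barely moves across this series; the rolling variance climbs steadily. That is the pattern a results dashboard averages away.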

Flickering

Oscillation between states. The system briefly shifts to a different pattern, then snaps back. Then shifts again. It’s sampling alternatives, unable to commit to either.

Like someone who quits smoking, relapses, quits again, and relapses again. Flickering between states signals proximity to a tipping point: the system will eventually lock into one regime or the other, and that transition (or reversion) is close.
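Flickering can be quantified by labeling each period with the state the system was in and counting switches. This is a minimal sketch assuming two hypothetical state labels, `"old"` and `"new"`; a rising switch count in recent windows is the signal.

```python
def count_switches(states: list[str]) -> int:
    """Number of times consecutive periods are in different states."""
    return sum(1 for prev, cur in zip(states, states[1:]) if prev != cur)


# Hypothetical log: stable in the old pattern, then sampling the new one.
log = ["old"] * 6 + ["new", "old", "new", "new", "old", "new"]
first_half, second_half = log[:6], log[6:]
print(count_switches(first_half), count_switches(second_half))
```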

Workarounds proliferate

Formal processes get bypassed. The official way of doing things no longer matches the actual way. Workarounds multiply. The formal regime exists on paper, while everyone actually operates differently.

Like a family that maintains the fiction of “Sunday dinner together” when everyone actually eats separately. Or a company whose official approval process is knowingly bypassed because it’s too slow. Workarounds reveal that a regime no longer fits reality. It hasn’t been formally abandoned, but it’s already been practically replaced.

These signals often appear together.

A system showing one is worth watching. A system showing three or four is already transitioning—the only question is whether anyone has noticed.
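The rule of thumb above can be written as a simple tally. The four signal names and the two cutoffs (one signal: worth watching; three or four: already transitioning) come from the text. Treating each signal as a yes/no flag, and grouping two signals with “worth watching”, are assumptions made for this sketch.

```python
SIGNALS = ("slowing_recovery", "rising_variance", "flickering", "workarounds")


def assess(observed: set[str]) -> str:
    """Map the set of observed early warning signals to the text's rule of thumb."""
    unknown = observed - set(SIGNALS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    n = len(observed)
    if n == 0:
        return "no early warning signals"
    if n >= 3:
        return "already transitioning"
    return "worth watching"  # one signal per the text; two is an assumption


print(assess({"rising_variance", "workarounds"}))
```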

Three ancestors

This understanding comes from multiple fields converging on the same physics:

Ashby showed that control requires variety. A system can only regulate what it can match in complexity. When disturbance exceeds the system’s variety, control fails. This is the Law of Requisite Variety—and it explains why regimes fail when demand outgrows them.

A thermostat can regulate temperature within a range. Move outside that range, and it can’t keep up—switching constantly but never achieving stability. The thermostat hasn’t broken. It’s been exceeded. Its regulatory variety is smaller than the demand variety.

The same physics applies to a manager who could handle a five-person team but drowns with fifteen, or to a business model that worked in a stable market but fails in a volatile one.
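A toy model makes the thermostat point concrete. Assume heat loss (the disturbance) comes in whole units and the heater can supply anywhere from 0 up to a hypothetical `max_heat` units (its repertoire of responses). The heater matches the loss while it can; the number of distinct room states that result measures how much disturbance variety leaks through. This is an illustration in the spirit of requisite variety, not Ashby’s formal statement of the law.

```python
def room_deviation(heat_loss: int, max_heat: int) -> int:
    """Units below setpoint after the heater responds (0 = regulated)."""
    heat = min(heat_loss, max_heat)  # match the loss until the heater saturates
    return heat_loss - heat


def outcome_variety(loss_levels: range, max_heat: int) -> int:
    """How many distinct room states the regulator lets through."""
    return len({room_deviation(d, max_heat) for d in loss_levels})


print(outcome_variety(range(5), max_heat=4))   # demand within the heater's range
print(outcome_variety(range(10), max_heat=4))  # demand outgrows the heater
```

Within range, every disturbance maps to the same outcome: on setpoint. Once demand outgrows the heater, nothing has broken; the heater is simply exceeded, and the excess disturbance variety shows up directly in the room.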

Wiener showed that feedback loops can saturate. Push any feedback system beyond its operating range, and it fails to regulate. The feedback is still happening—signals still flow, responses still fire—but stability becomes impossible. The system oscillates, overcompensates, or locks up.

This is why “trying harder” often fails. The feedback loop is already maxed out. More effort just produces more oscillation.
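The “more effort, more oscillation” point can be illustrated with a simple proportional correction loop, swapped in here as an assumption: each step closes `gain` times the current gap to the target. It shows overcompensation rather than saturation proper, and the gains, start value, and step count are hypothetical.

```python
def correct(gain: float, start: float = 10.0, target: float = 0.0, steps: int = 6) -> list[float]:
    """Trajectory when each step closes `gain` times the current gap to the target."""
    x, path = start, [start]
    for _ in range(steps):
        x = x + gain * (target - x)
        path.append(round(x, 2))
    return path


for gain in (0.5, 1.5, 2.5):
    print(gain, correct(gain))
```

Below a gain of 1 the gap shrinks smoothly. Between 1 and 2 each correction overshoots, but the swings die out. Above 2 each overcorrection is larger than the last. In this toy loop, pushing harder past a certain point is what creates the oscillation.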

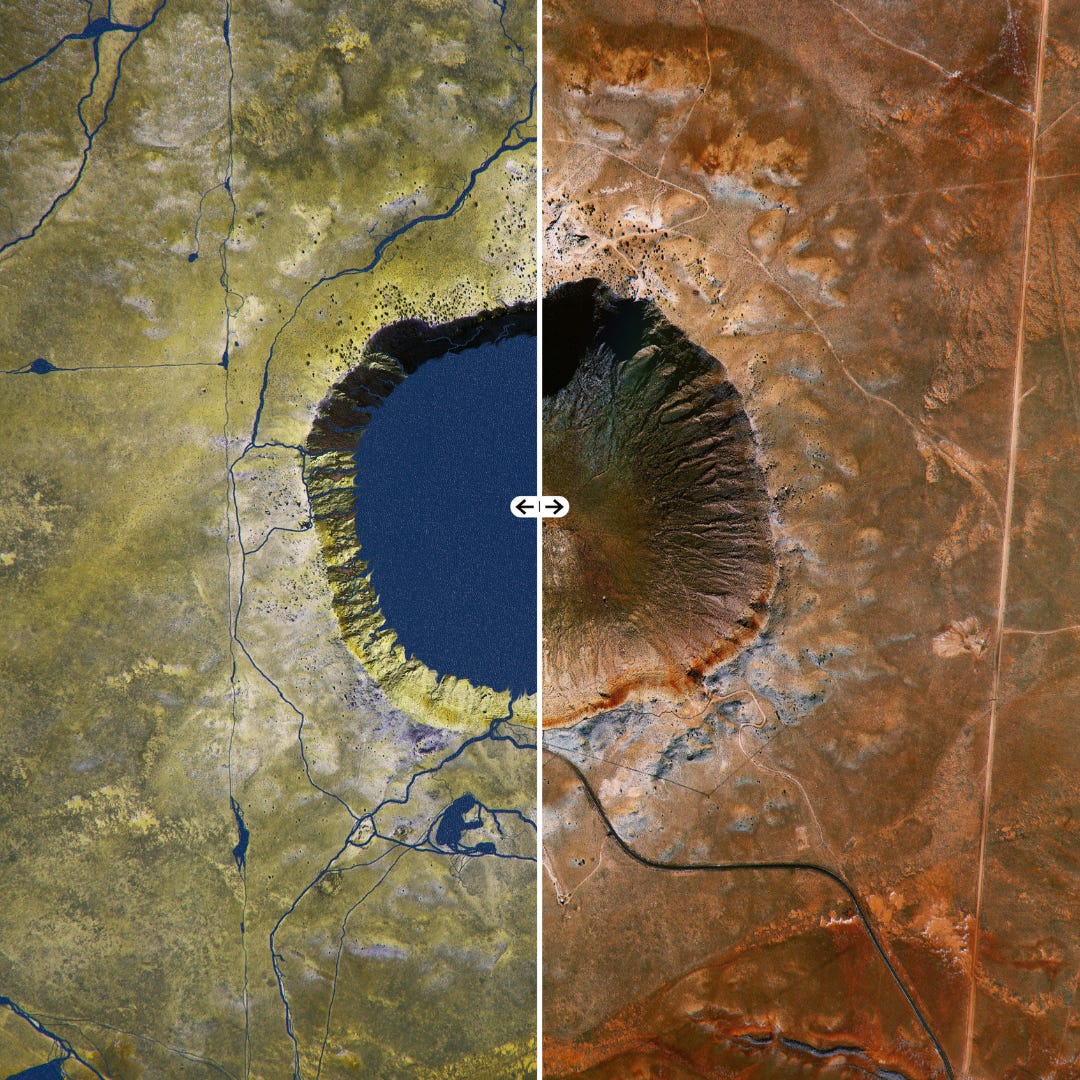

Scheffer (and others studying critical transitions) showed that regime shifts have early warning signals. Lakes eutrophying, climate systems tipping, markets crashing, populations collapsing—all show critical slowing down and increased variance before the transition. The signals are generic. They appear in any system approaching a tipping point because they reflect the underlying mathematics of stability loss.

These aren’t just ecological phenomena. They’re physics. The same signals that predict a lake flipping to algae dominance predict a company flipping to dysfunction.
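In the critical-transitions literature, these generic signals are usually estimated as rising lag-1 autocorrelation (the statistical face of critical slowing down) and rising variance within a window. The sketch below simulates a hypothetical AR(1) process whose restoring force weakens over time (its coefficient drifts toward 1) and compares an early window with a late one. The model, window size, and seed are illustrative assumptions; real analyses also detrend the data and use rolling windows.

```python
import random
from statistics import mean, pvariance


def lag1_autocorr(xs: list[float]) -> float:
    """Sample lag-1 autocorrelation around the window mean."""
    m = mean(xs)
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    den = sum((a - m) ** 2 for a in xs)
    return num / den


def simulate(n: int = 4000, seed: int = 1) -> list[float]:
    """AR(1): x[t] = phi[t] * x[t-1] + noise, with phi drifting from 0.1 to 0.95."""
    rng = random.Random(seed)
    x, xs = 0.0, []
    for t in range(n):
        phi = 0.1 + 0.85 * t / (n - 1)  # weakening restoring force
        x = phi * x + rng.gauss(0.0, 1.0)
        xs.append(x)
    return xs


series = simulate()
early, late = series[:500], series[-500:]
for name, w in (("early", early), ("late", late)):
    print(f"{name}: lag-1 autocorr={lag1_autocorr(w):.2f}, variance={pvariance(w):.2f}")
```

Nothing in the noise changes; only the restoring force weakens. Both indicators still rise, which is why the same signals show up in lakes, climate records, and markets.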

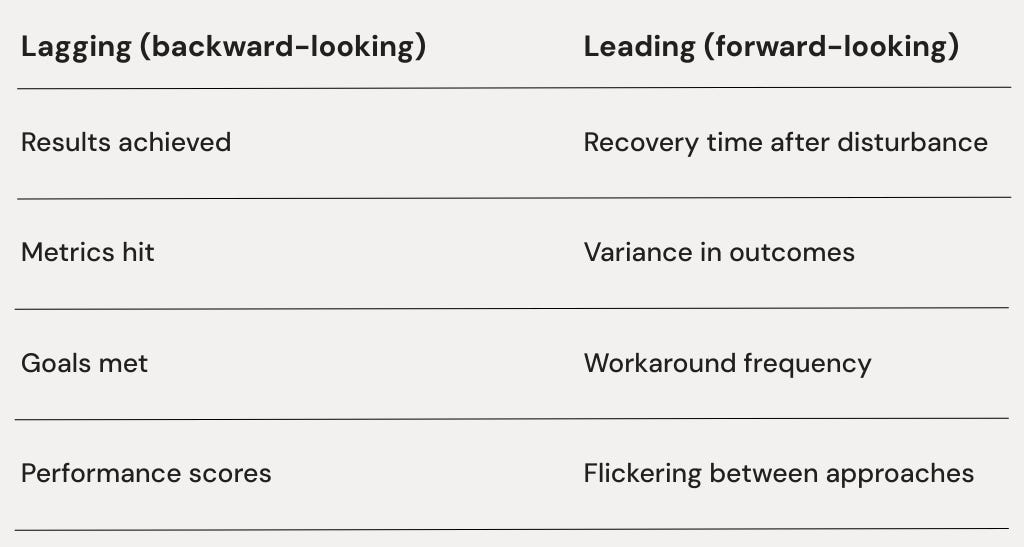

Lagging vs. leading

Most measurement systems are backward-looking. Results, revenue, performance metrics—these tell you how the regime performed. Past tense.

The early warning signals are forward-looking. They tell you whether the regime is about to fail. Future tense.

By the time lagging indicators show failure, the regime has already failed. The transition is underway, whether you’re ready or not. This is why so many failures feel “sudden”: the backward-looking dashboard stayed green until the moment of collapse.

Leading indicators give a warning. They buy time—time to build up SIRF for the transition, time to identify the new configuration, time to make the breakdown generative rather than destructive.

The information exists. Most systems just don’t track it.

Stressed vs. failed

When a regime shows strain, there are two possibilities:

A stressed regime means it’s struggling but still regulating. Recovery time is longer than ideal but stable. Variance is elevated but bounded. Workarounds exist but haven’t become the norm. The pattern is working hard, but it’s working.

Stressed regimes respond to support. Add resources, remove obstacles, reduce demand, and the signals should improve. Recovery time shortens. Variance decreases. The regime stabilizes.

A failed regime means it’s no longer regulating. Recovery time keeps lengthening no matter what. Variance is unbounded: outcomes are essentially random. Workarounds have become the actual operating system while the official regime is theater.

Failed regimes don’t respond to support. Add resources and they disappear into the void. Remove obstacles and new ones appear. The signals don’t improve because the regulatory mechanism itself is broken. You’re watering a plant with dead roots.

The test is support response. Stressed regimes recover more easily when helped. Failed regimes consume help without recovering. The resources go into maintaining the fiction, feeding the workarounds, and lining the pockets of whoever benefits from the confusion, but not into restoring function.
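The support-response test can be sketched as a before/after comparison of the same signals. Everything here is hypothetical: the metric names, the assumption that lower is healthier for every metric, the 20% improvement threshold, and the majority rule for calling the result.

```python
def support_response(before: dict[str, float], after: dict[str, float],
                     min_improvement: float = 0.20) -> str:
    """Classify a regime by how its warning signals respond to added support.

    Assumes lower is better for every metric (e.g. recovery days, variance).
    """
    improved = 0
    for metric, old in before.items():
        new = after[metric]
        if old > 0 and (old - new) / old >= min_improvement:
            improved += 1
    # Most signals improving suggests the regulatory mechanism still works.
    return "stressed" if improved > len(before) / 2 else "possibly failed"


before = {"recovery_days": 12.0, "weekly_variance": 9.0, "open_workarounds": 15.0}
helped = {"recovery_days": 7.0, "weekly_variance": 5.0, "open_workarounds": 14.0}
stuck = {"recovery_days": 13.0, "weekly_variance": 10.0, "open_workarounds": 18.0}
print(support_response(before, helped), support_response(before, stuck))
```

The point of the design is that you measure the response to help, not the amount of help given. A regime that absorbs support while its signals stay flat or worsen is the one consuming resources without recovering.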

This connects to the alignment gap from Series 2.

A failing regime shows widening divergence between stated and actual. What you say you’re doing increasingly doesn’t match what’s actually happening. The workarounds are the actual; the official process is the stated.

When the gap becomes systematic and permanent, the regime has failed. It just hasn’t been acknowledged.

Why organizations miss the signals

The signals exist. Why don’t organizations see regime failure coming?

Lagging indicator obsession

Dashboards show results, which reveal failure late. By the time results collapse, the regime had failed months or years earlier.

The dashboard was green until the moment it went red.

Workaround normalization

Workarounds become “how we really do things here.” Nobody tracks them as signals of regime failure. They’re absorbed into culture, even celebrated as “flexibility” or “entrepreneurial spirit.”

The systematic bypass of official process becomes invisible.

Recovery time invisibility

Nobody measures how long it takes to recover from a disturbance. It’s not on the dashboard. So critical slowing down goes unnoticed.

“We handled it” becomes the story—even when “handling it” took three times longer than last year.
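Recovery time is cheap to measure if disturbances are logged with a start date and a back-to-normal date. A minimal sketch, assuming a hypothetical incident log of `(start, recovered)` date pairs; comparing the median recovery per year surfaces exactly the pattern that “we handled it” hides.

```python
from datetime import date
from statistics import median

# Hypothetical incident log: (start, back_to_normal).
incidents = [
    (date(2023, 2, 1), date(2023, 2, 4)),
    (date(2023, 6, 10), date(2023, 6, 12)),
    (date(2023, 9, 5), date(2023, 9, 9)),
    (date(2024, 1, 15), date(2024, 1, 24)),
    (date(2024, 5, 2), date(2024, 5, 11)),
    (date(2024, 8, 20), date(2024, 9, 1)),
]


def median_recovery_by_year(log: list[tuple[date, date]]) -> dict[int, float]:
    """Median days from disturbance to recovery, grouped by the year it started."""
    by_year: dict[int, list[int]] = {}
    for start, recovered in log:
        by_year.setdefault(start.year, []).append((recovered - start).days)
    return {year: median(days) for year, days in sorted(by_year.items())}


medians = median_recovery_by_year(incidents)
print(medians, medians[2024] / medians[2023])
```

Every incident in this log was “handled”. The ratio of medians is the number nobody puts on the dashboard.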

Variance dismissal

“We had a bad quarter.”

“That was an unusual situation.”

“Those were special circumstances.”

Variance is explained away case by case rather than recognized as a pattern.

Each swing has a story; the story obscures the signal.

Regime defense

People benefit from the current regime—status, resources, identity. Acknowledging failure threatens those benefits. So evidence gets filtered, reinterpreted, or ignored. The regime’s defenders are also its diagnosticians. The conflict of interest is more structural than personal.

Physics doesn’t care. The signals accumulate whether or not anyone is watching. Regime failure arrives on its own schedule. The only question is whether it arrives as a “surprise.”

What comes next

Knowing a regime has failed doesn’t tell you what comes next. The system needs a new configuration—but which one? How do new regimes form? What makes some transitions smooth and others catastrophic?

That’s the next post.

Application

Notice: Pick one area where you’re debating whether to persist or abandon.

Name: Which signal is strongest—recovery time lengthening, variance increasing, flickering between approaches, workarounds multiplying?

Test: If it’s stressed, added support should reduce these signals within weeks. If it’s failed, added support will mostly increase the cost of maintaining the fiction.

Keep in mind: Regime failure is measurable. The early warning signals—slowing recovery, rising variance, flickering, workaround proliferation—are leading indicators that predict transition before lagging indicators show collapse. Stressed regimes respond to support. Failed regimes consume it.

The science

Established:

Critical slowing down predicts transitions (Scheffer et al., validated in ecological, climate, and financial systems)

Early warning signals have predictive value across domains

Requisite variety limits control (Ashby, foundational cybernetics)

Feedback saturation causes control failure (Wiener, foundational cybernetics)

Genesis claim:

The same signals that predict ecological and physical transitions predict organizational and personal regime failure

Falsification:

Early warning signals should predict regime transition. If organizational transitions occur randomly with respect to these signals—if stressed and failed regimes transition at equal rates regardless of signal strength—the framework fails.