The expensive brain

The brain is about 2% of body weight. At rest, it consumes roughly 20% of the body’s energy.

That’s not inefficiency. That’s the cost of knowing.

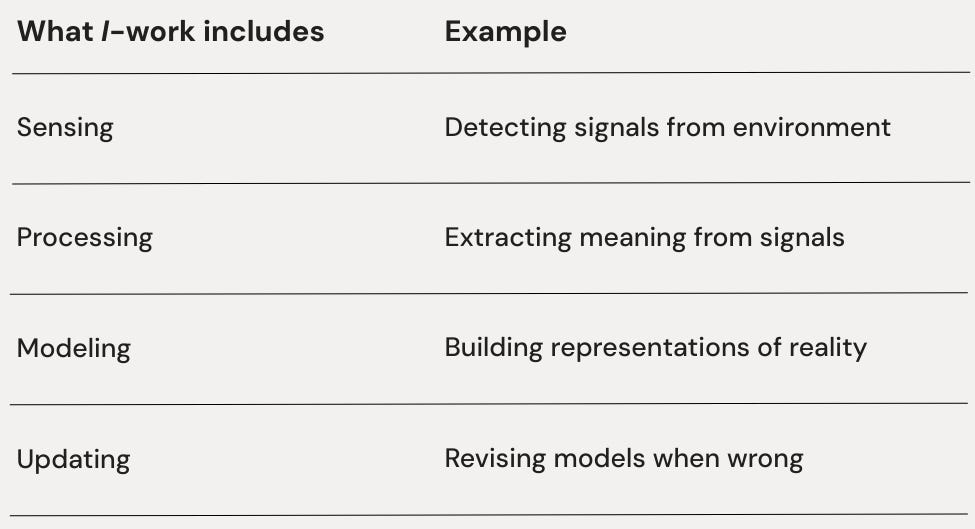

Your brain is doing something expensive: sensing the environment, processing signals, building models of reality, updating those models when they’re wrong. This work requires energy—a lot of it.

And it’s not optional. A brain that stops doing this work doesn’t just become less intelligent. It fails to guide the organism. The body with plenty of energy and intact structure walks off a cliff because it didn’t see the cliff.

Information work is expensive because information is physical. Computation costs energy. Models require maintenance. It turns out that knowing isn’t free.

What knowing requires

When we say a system “knows” something, we mean it has a model: an internal representation that tracks an external state. The model might be simple (e.g., a thermostat tracking temperature) or complex (e.g., a human tracking social dynamics).

Either way, it requires four kinds of work: sensing, processing, modeling, and updating.

Each of these costs energy. Each can fail independently.

Sensing requires receptors, attention, and access to a signal. Miss the signal, and you can’t know.

Processing requires computation, filtering, and interpretation. Receive the signal but fail to process it, and you still don’t know.

Modeling requires memory, pattern recognition, and representation. Process information but fail to integrate it into a model, and you can’t act on what you know.

Updating requires recognizing errors and revising. Have a model but fail to update it, and you know something that’s no longer true.

Most I-failures aren’t due to a lack of data. They’re a refusal or an inability to update.

A company can have dashboards (sensing), analytics teams (processing), and strategic plans (modeling), but if the strategy doesn’t update when evidence contradicts it, the updating function has failed. All other I-work becomes theater.

I-work is the continuous effort of sensing, processing, modeling, and updating.
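The four stages can be sketched as a loop. What follows is a minimal toy, not a model of any real thermostat: the class name, the `learning_rate` parameter, and the temperature values are all assumptions made for illustration. It shows two copies of the same system, identical in sensing and processing, where one has its updating step switched off.

```python
from dataclasses import dataclass


@dataclass
class Thermostat:
    """Toy I-work loop (hypothetical): sense, process, model, update."""
    estimate: float       # the model: the believed room temperature
    learning_rate: float  # 0.0 means the model never updates

    def sense(self, true_temp: float) -> float:
        # Sensing: read the external signal (noise-free here, for simplicity).
        return true_temp

    def process(self, reading: float) -> float:
        # Processing: turn the raw reading into a prediction error.
        return reading - self.estimate

    def update(self, error: float) -> None:
        # Updating: revise the model in proportion to the error.
        self.estimate += self.learning_rate * error


def run(thermostat: Thermostat, temps: list[float]) -> float:
    """Feed a sequence of true temperatures through the loop."""
    for true_temp in temps:
        error = thermostat.process(thermostat.sense(true_temp))
        thermostat.update(error)
    return thermostat.estimate


# The room sits at 20 degrees, then the environment shifts to 30.
temps = [20.0] * 5 + [30.0] * 20

updating = Thermostat(estimate=20.0, learning_rate=0.5)
frozen = Thermostat(estimate=20.0, learning_rate=0.0)

final_updating = run(updating, temps)
final_frozen = run(frozen, temps)

print(f"updating model believes: {final_updating:.2f}")
print(f"frozen model believes:   {final_frozen:.2f}")
```

Both thermostats receive the same readings. The frozen one still reports the old temperature after the shift: it has data, but the data never reaches the model.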

Lineage

Shannon: Information is quantifiable, physical. Channels have finite capacity. Noise degrades signal.

Landauer: Computation has a thermodynamic cost. Erasing a bit requires a minimum amount of energy, a limit set by physics, not engineering.

Kolmogorov: Some patterns are irreducibly complex: their shortest description is about as long as the pattern itself. Complexity has a floor; some models are inherently expensive.

Pattern: information work is physical work. It requires energy, has costs, can fail for lack of resources.
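Two of these results reduce to short formulas, and a rough calculation gives a feel for the magnitudes. The sketch below assumes the standard statement of the Landauer limit (k T ln 2 per erased bit) and the Shannon-Hartley capacity formula for a channel with additive white Gaussian noise. The function names and the example values (310 K, a 3 kHz channel, a signal-to-noise ratio of 1000) are illustrative choices, not figures from this piece.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant (exact SI value)


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy to erase one bit at the given temperature."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def shannon_capacity_bits_per_s(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity of a noisy channel (Gaussian noise assumed).

    snr_linear is the signal-to-noise power ratio as a plain ratio, not in dB.
    """
    return bandwidth_hz * math.log2(1 + snr_linear)


# Illustrative inputs: roughly body temperature, and a narrow noisy channel.
body_temp_limit = landauer_limit_joules(310.0)
capacity = shannon_capacity_bits_per_s(3000.0, 1000.0)

print(f"Landauer limit at 310 K: {body_temp_limit:.3e} J per bit erased")
print(f"Channel capacity: {capacity:.0f} bits per second")
```

The Landauer figure is a floor, not an estimate of what real systems spend; real systems operate well above it.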

The model maintenance problem

Here’s something counterintuitive: better models cost more.

A simple model is cheap to maintain. “Hot things are dangerous” doesn’t require much updating. It’s often wrong (not all hot things are dangerous; some dangerous things aren’t hot), but it’s cheap.

A complex model is expensive. Tracking exactly which things are dangerous under which circumstances requires more sensing (gathering detailed information), more processing (integrating nuance), more storage (remembering distinctions), and more updating (revising when new cases appear).

Accuracy has a cost. This is why organisms satisfice rather than optimize: good-enough models that are cheap beat perfect models that are too expensive to maintain.

For organizations, this creates a tension. Simple models miss important nuance. Complex models consume resources that could go elsewhere. Every organization implicitly chooses how much to spend on knowledge.
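The difference between satisficing and optimizing can be made concrete with a small sketch. Everything in it is hypothetical: the three candidate models, their accuracy and upkeep numbers, and the `good_enough` and `budget` thresholds are invented to illustrate the trade-off, not measured from anything.

```python
from typing import NamedTuple, Optional


class Model(NamedTuple):
    name: str
    accuracy: float     # fraction of cases predicted correctly (made up)
    upkeep_cost: float  # energy units per period to maintain (made up)


CANDIDATES = [
    Model("hot things are dangerous", 0.70, 1.0),
    Model("hot or sharp things are dangerous", 0.85, 3.0),
    Model("case-by-case hazard catalog", 0.99, 40.0),
]


def satisfice(models: list[Model], good_enough: float, budget: float) -> Optional[Model]:
    """Return the cheapest model that clears the accuracy bar within budget."""
    acceptable = [
        m for m in models
        if m.accuracy >= good_enough and m.upkeep_cost <= budget
    ]
    return min(acceptable, key=lambda m: m.upkeep_cost, default=None)


def optimize(models: list[Model]) -> Model:
    """Return the most accurate model, ignoring what it costs to maintain."""
    return max(models, key=lambda m: m.accuracy)


chosen = satisfice(CANDIDATES, good_enough=0.80, budget=10.0)
best = optimize(CANDIDATES)

print("satisficer keeps:", chosen.name if chosen else "nothing affordable")
print("optimizer keeps: ", best.name)
```

With these numbers the optimizer picks the hazard catalog, which costs four times the available budget. The satisficer settles for the middle model, which is less accurate but can actually be maintained.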

Across scales

I-work looks different at different scales:

Cell: Receptor signaling, gene regulation. Cells sense their environment through surface receptors, process signals through signaling cascades, and maintain models of gene-expression states.

Cancer is partly an I-failure—cells stop responding appropriately to signals.

Individual: Attention, learning, memory. You sense through perception, process through cognition, model through memory and belief, and update through learning.

Mental fatigue is often I-depletion—you’ve run out of resources for cognitive work.

Team: Communication systems, shared mental models. Teams sense through information-gathering practices, process through discussion, model through shared understanding, and update through feedback.

Miscommunication is I-failure—the team’s information systems aren’t working.

Organization: Data infrastructure and decision-support systems. Organizations sense through metrics, process through analysis, model through strategy, and update through review cycles.

“Flying blind” is organizational I-failure.

Civilization: Media, education, and research institutions. Civilizations sense through journalism and science, process through education and public discourse, model through shared cultural knowledge, and update through institutional learning.

Disinformation is civilizational I-failure.

I vs. F vs. S

How is I different from F and S?

F provides energy flow. Without F, there’s nothing to power information work.

S provides structure. Without S, there’s no stable system to have knowledge.

I provides awareness. Without I, the system has energy and structure but doesn’t know what’s happening.

A system with F and S but no I is like a well-resourced, well-organized blind person walking toward a cliff. The resources are there. The structure is there. Knowledge of the cliff is lacking.

Many organizational failures are I-failures. The company had money (F). The structure was fine (S). But it didn’t see the market shift, the competitor’s move, the technology change. It wasn’t sensing, processing, modeling, or updating effectively.

The trap of seeming to know

There’s a failure mode specific to I-work: maintaining models that are wrong.

A system can invest substantial resources in sensing, processing, and modeling, and still be wrong. The models get out of sync with reality. The system “knows” things that aren’t true.

This is worse than not knowing. Not knowing is honest ignorance. Maintaining a false model is a confident error. The system acts on its model, the model is wrong, and the actions produce unexpected results.

We’ll explore this deeply in Series 4 (the model-reality gap). For now, notice: I-work isn’t just about having models. It’s about having models that track reality. The updating part—revising when wrong—is where I-work often fails.

Three functions down

We now have:

F-work: Energy throughput—acquiring, storing, allocating resources

S-work: Structural maintenance—boundaries, constraints, patterns

I-work: Information work—sensing, processing, modeling, updating

F keeps things from dissolving. S determines what stays organized. I enables awareness.

But there’s still something missing. A system with F, S, and I can still die. How? By closing itself off. No matter how much energy, structure, and information a system has, if it can’t exchange with its environment, it suffocates.

What keeps systems connected?

Application

Notice: Pick one domain where you’re surprised by outcomes—work, health, market, relationships.

Name: Which layer is failing: sensing (not detecting signals), processing (not extracting meaning), modeling (not building representations), or updating (not revising when wrong)?

Test: If you improved only that layer, would prediction accuracy improve within 2–4 weeks?

Keep in mind: Knowing requires continuous work: sensing, processing, modeling, updating. Each costs energy. Most I-failures aren’t due to a lack of data; they’re a refusal or an inability to update.

The science

Established:

Erasing information has a minimum energy cost: k T ln 2 per bit, where k is Boltzmann’s constant and T is the temperature. This is Landauer’s principle, experimentally verified.

Channel capacity limits information transfer. This is Shannon, foundational to information theory.

Genesis claim:

I as one of four necessary work-functions for organized complexity.

Falsification:

I-work reduction should predict sensing/modeling degradation independent of F and S. Systems that maintain F and S but neglect I should lose awareness.